It’s a bit cliche to say that the hardest thing about PA school is how much we have to learn. I mean, it’s true, but it doesn’t say much.

I’ve mentioned in the past how one of the biggest obstacles I had to overcome to even be considered for admission, let alone be admitted, was my undergrad GPA from over 35 years ago. And I get it. If you’re attending a grad program, really any grad program, right after your undergraduate degree, your undergraduate GPA is fairly indicative of how you’ll perform in grad school. And the truth is, 35 years ago, I would have failed out of grad school. But I’m not the person I was 35 years ago.

One of the things I’ve had to do a lot of over the past 8 months or so is adapt my learning style as I go. I have to figure out what has been working and what hasn’t been working. I spoke in my previous post about how tough I find pharm. The first piece of good news is that I passed that exam. Not by much. But I passed. However, I did something I probably would not have done in my undergrad days: I set up a meeting with the course coordinator for pharm to discuss how I should approach things. So I’m already a better student than I was 35 years ago.

For me, the hardest part about pharmacology is that it’s really mostly rote memorization. There are times when the suffix of a drug name can help clue you in (e.g. -olol marks what we call a beta blocker, which is used for hypertension, or HTN), but not always. Propranolol is a beta blocker we use for HTN, but also for essential tremor! In any case, rote memorization doesn’t come as easily to me as it did 35 years ago.

My professor gave me some advice, which honestly we had been told before, but this time in a more concrete fashion. See, I tend to make a lot of flashcards on 3″x5″ index cards. I’ve made a few thousand by now. And they help. But she suggested I keep them more succinct and make more of them. She also suggested I keep them simpler. I had been making them far too complex. It sounds like a simple change, but I think it’ll make a difference (we’ll see in a few weeks after my next pharm exam).

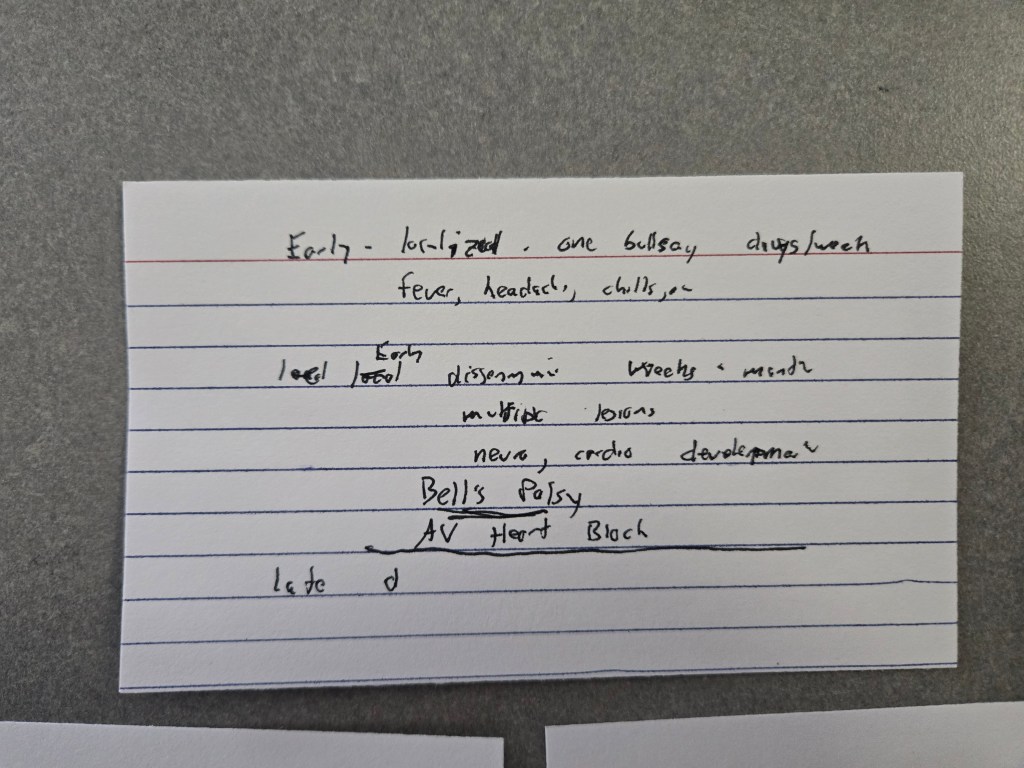

That said, I caught myself today making a flashcard on Lyme Disease stages and I realized I was cramming more and more details onto it. I stopped. I realized I was falling back on my old ways. So how does this relate to programming?

I was suddenly reminded of when I was hired for a programming job to work on a project using Visual Basic and Visual C#. Both are what programmers call “object-oriented” languages. I had grown up on more procedural languages. I really had very little experience programming in an object-oriented language. In fact, I told them that during my interview. Their response was “we want you anyway.” So I quickly came up to speed and started to think like an object-oriented programmer. And for this project, this was actually a great paradigm. The program helped engineers design and specify the parts for a particular type of physical object.

But every once in a while I’d find myself facing a particular programming challenge and finding the code hard to write and very complex. And then it would suddenly dawn on me: I had stopped thinking in terms of objects and was trying to think like a procedural programmer. Once I went back and approached the problem from an object-oriented paradigm, often the solution would pop out very quickly and the code would be shorter and clearer.

It took a paradigm shift. So I simplified the first card greatly.

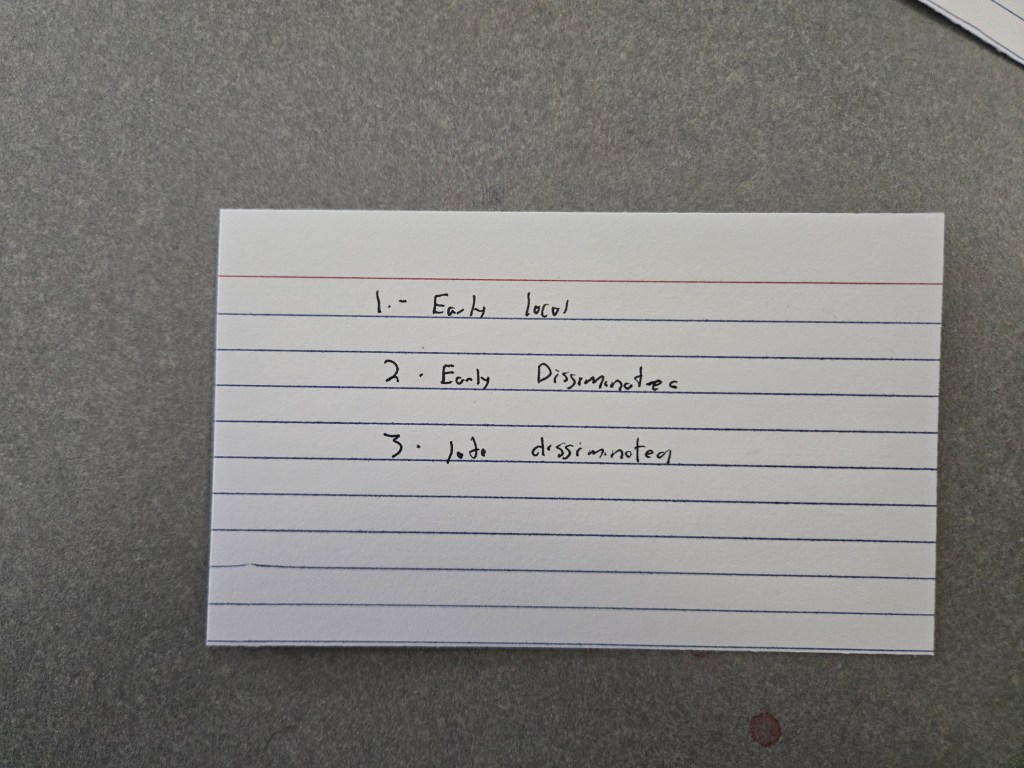

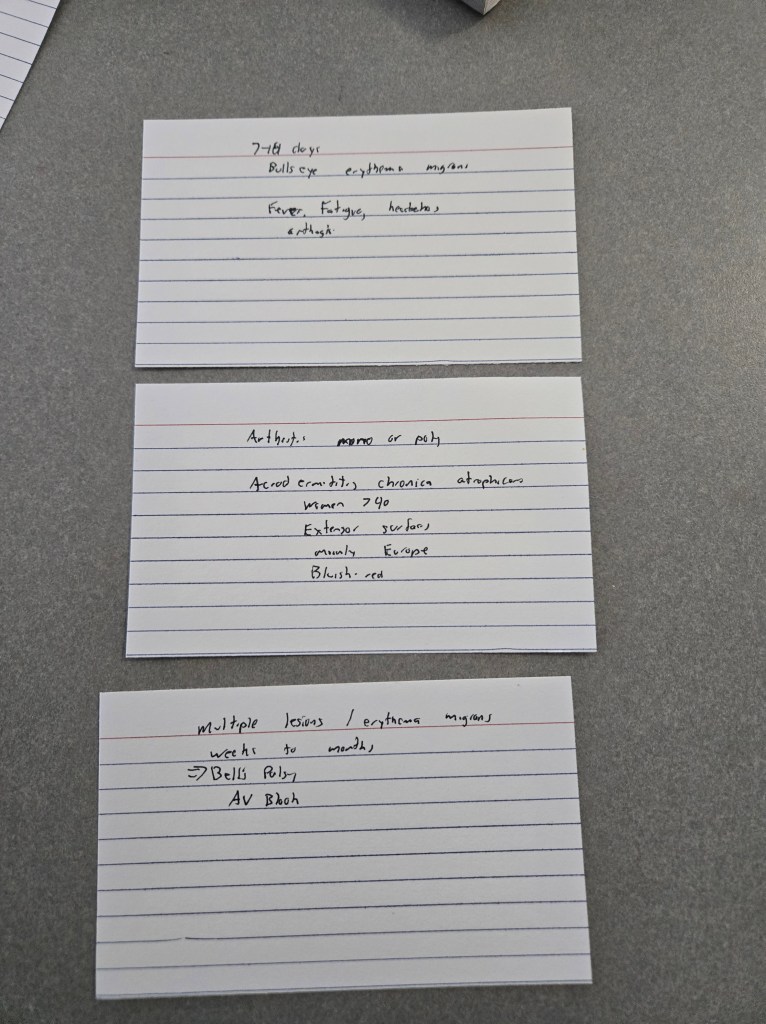

And then I made three separate cards.

This helps in two ways. First, rather than having to remember every detail on the overly complex card and perhaps confusing them, I can focus on the individual stages of Lyme. If I forget one, I’m only impacted in that area at test time.

But also, I can use the cards “forwards and backwards”. That is, I can look at the front (which states the stage) and work on recalling the signs and symptoms for that stage. Or, I can look at the back and try to recall what stage it is. It reinforces the memorization process AND means that if they ask a question in either direction, I’m more likely to get the right answer.
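Since I brought up programming, here’s a rough Python sketch of the same idea: one small card per stage, usable in either direction. To be clear, the names `Card`, `LYME_CARDS`, and `quiz` are ones I made up for illustration. The card text is a simplified stand-in, not a copy of my actual cards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    front: str  # the prompt side, here a stage name
    back: str   # the answer side, here a few hallmark findings


# Illustrative, simplified content only (an assumption, not the real cards).
LYME_CARDS = [
    Card("Lyme: early localized", "erythema migrans rash, flu-like symptoms"),
    Card("Lyme: early disseminated", "multiple rashes, facial palsy, heart block"),
    Card("Lyme: late disseminated", "arthritis of large joints, especially the knee"),
]


def quiz(cards, prompt, backwards=False):
    """Return the hidden side of the card whose shown side equals prompt.

    Forwards shows the front and hides the back; backwards does the reverse.
    Returns None when no card matches.
    """
    for card in cards:
        if backwards:
            shown, hidden = card.back, card.front
        else:
            shown, hidden = card.front, card.back
        if shown == prompt:
            return hidden
    return None


# Forwards: see the stage, recall the findings.
print(quiz(LYME_CARDS, "Lyme: late disseminated"))
# Backwards: see the findings, recall the stage.
print(quiz(LYME_CARDS, "erythema migrans rash, flu-like symptoms", backwards=True))
```

Because each card holds one stage, forgetting one only costs me that one lookup, and the same three cards answer questions asked in either direction.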

Does this work for everything? No. But I’m already liking it for some things.

Will it work? We’ll see.

But the point is, for me to get better at pharm (though ironically this is for a medicine lecture) I need to make a paradigm shift in how I study. Wish me luck.