I still recall the first computer program I wrote. Or rather co-wrote. It was a fairly simple program, in Fortran I believe, though that’s really an educated guess. I don’t have a copy of it. It was in either 7th or 8th grade, when several of us were given the opportunity to go down to the local high school and learn a bit about the computer they had there. I honestly have NO idea what kind of computer it was, perhaps a PDP-9 or PDP-11. We were asked for ideas on what to program, and the instructor quickly ruled out our suggestion of printing all the numbers from 1 to 1 million. He made us estimate how much paper that would take.

So instead we wrote a program to convert temperatures from Fahrenheit to Celsius. The program was, as I recall, a few feet long. “A few feet long? What are you talking about, Greg?” No, this was not the printout. This wasn’t how far it scrolled on the screen. Instead, it was the length of the (as I recall) yellow paper tape that contained it. The paper tape had holes punched into it that could be read by a reader. You’d write your program on one machine, then take the tape over to the computer and feed it into the reader, and the computer would run it. I honestly don’t recall how we entered the values to be converted, whether they were already on the tape or came in through some other interface. In any case, I loved it, and that’s when I fell in love with computers. Unfortunately, somewhere over the years, that paper tape disappeared. That saddens me. It’s a memento I wish I still had.

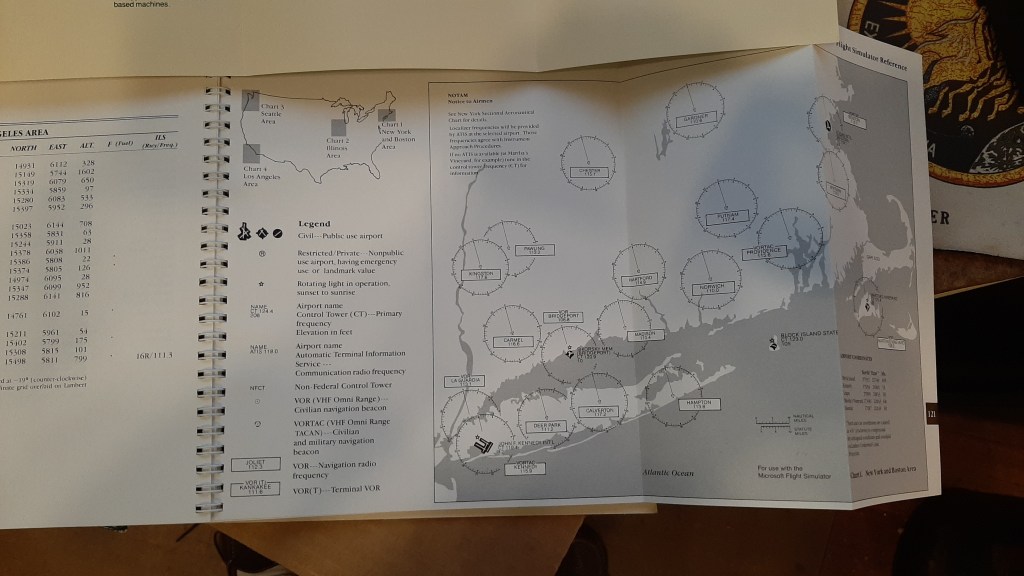

Within four or five short years, the world was changing, and quickly. The IBM PC had been released while I was in high school, and I went from playing a text adventure game called CIA on a TRS-80 Model II to programming in UCSD Pascal on an original IBM PC. (I should note that this was my first encounter with the concepts of a virtual machine and a p-code machine.) This was great, but I still wanted more. Somewhere along the line I encountered a copy of Microsoft’s Flight Simulator. I loved it. In January of 1985 my dad took me on a vacation to St. Croix, USVI. Our first stop on that trip was a night in NYC before we caught our flight the next morning. To kill some time, I stepped into 47th Street Photo and bought myself a copy of Flight Simulator. It was the first software I ever bought with my own money. (My best friend Peter Goodrich and I had previously acquired a legal copy of DOS 2.0, but we “shared” it. OK, so not entirely legal, but hey, we were young.)

I still have the receipt.

For a high school student in the ’80s, this wasn’t cheap. But it was worth it!

I was reminded of this the other day while talking with some old buddies I had met back when the Usenet newsgroup sci.space.policy was still the place to go for the latest and greatest discussions on space programs. We were discussing our early introductions to computers and the like.

I haven’t played this version in years, and honestly, I’m not entirely sure I still have any hardware that could run it. For one thing, as I recall, this version was designed around the 4.77 MHz clock speed of the original IBM PC. Some of my readers may remember that when PC AT clones came out running the 80286 at up to 8 MHz (and faster on some clones), they often had a switch to run the CPU at a slower speed. This was one reason why: otherwise many games simply ran about twice as fast, and users couldn’t react quickly enough. So even if I could find a 5 1/4″ floppy drive and get my current machine to read the disks in a VM, I’m not sure I could slow a VM down enough to play it. But I may have to try this one of these days. Just for the fun of it.

I still have the original disks and documentation that came with it.

A part of me does wonder whether this is worth anything more than the memories. But for now, it remains in my collection, along with an original copy of MapInfo that was gifted to me by one of its founders. But that’s a stroll down memory lane for another day.

And then, only a short six or so years later, I encountered SQL Server. That has ultimately been a big part of where I am today.