So, another SQL Saturday Albany is in the books. First, I want to thank Ed Pollack and his crew for doing a great job with a changing and challenging landscape. While I handle the day-to-day and monthly operations of the Capital Area SQL Server User Group, Ed handles the planning and operations of the SQL Saturday event. While the event itself is only one day of the year, I suspect he has the harder job!

This year, of course, planning was complicated by the fact that the event had to become a virtual event. It’s a bit ironic that we went virtual, because in many ways the Capital District of NY is probably one of the safer places in the country to have an in-person event. That said, virtual was still by far the right decision.

Lessons Learned

Since more and more SQL Saturdays will be virtual for the foreseeable future, I wanted to take the opportunity to pass on some lessons I learned and some thoughts I have about making them even more successful. Just like the #SQLFamily in general passing on knowledge about SQL Server, I wanted to pass on knowledge learned here.

For Presenters

The topic I presented on was “So you want to Present: Tips and Tricks of the Trade.” I think it’s important to nurture the next generation of speakers. Over the years I was given a great deal of encouragement and advice from the speakers who came before me and I feel it’s important to pass that on. Normally I give this presentation in person. One of the pieces of advice I really stress in it is to practice beforehand. I take that to heart. I knew going into this SQL Saturday that presenting this remotely would create new challenges. For example, on one slide I talk about moving around on the stage. That doesn’t really apply to virtual presentations. On the other hand, when presenting in person, I generally don’t have to worry about a “green-screen”. (Turns out for this one I didn’t either, more on that in a moment.)

So I decided to make sure I did a remote run-through of this presentation with a friend of mine. I can’t tell you how valuable that was. I found that slides I thought were fine when I practiced by myself didn’t work well when presented remotely. I found that the lack of feedback inhibited me at points (I actually do mention this in the original slide deck). With her feedback, I altered about half a dozen slides and ended up adding 3-4 more. I think this made for a much better and more cohesive presentation.

Tip #1: Practice your virtual presentation at least once with a remote audience

They don’t have to know the topic or, honestly, even have an interest in it. In fact, I’d argue it might help if they don’t, since they can then focus more on the delivery and any technical issues than on the content itself. Even if you’ve given the talk 100 times in front of a live audience, doing it remotely is different enough that you need feedback.

Tip #2: Know your presentation tool

This one actually came back to bite me and I’m going to have another tip on this later. I did my practice run via Zoom, because that’s what I normally use. I’m used to the built-in Chroma Key (aka green-screen) feature and know how to turn it on and off and to play with it. It turns out that GotoWebinar handles it differently and I didn’t even think about it until I got to that part of my presentation and realized I had never turned it on, and had no idea how to! This meant that this part of my talk didn’t go as well as planned.

Tip #3: Have a friend watch the actual presentation

I actually lucked out here: both my kids got up early (well, for them, considering it was a weekend) and watched me present. I’m actually glad I didn’t realize this until the very end or else I might have been more self-conscious. That said, even though I had followed Tip #1 above, they were able to give me more feedback. For example (and this relates to Tip #2), the demo I did using Prezi was choppy and not great. In addition, my Magnify Screen example, which apparently worked in Zoom, did not work in GotoWebinar! This feedback was useful. But even more so, if someone you know and trust is watching in real-time, they can give real-time feedback on issues such as bandwidth, volume levels, etc.

Tip #4: Revise your presentation

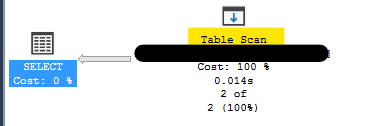

Unless your presentation was developed exclusively to be done remotely, I can all but guarantee that it needs some changes to make it work better remotely. For example, since most folks will be watching from their computer or phone, you actually may NOT need to magnify the screen as you would in a live presentation with folks sitting in the back of the room. During another speaker’s presentation, I realized they could have dialed back the magnification they had enabled in SSMS and it would have still been very readable and also presented more information.

You also can’t effectively use a laser pointer to highlight items on the slide-deck.

You might need to add a few slides to better explain a point, or even remove some since they’re no longer relevant. But in general, you can’t just lift and shift a live presentation to become a remote one and have it be as good.

Tip #5: Know your physical setup

This is actually a problem I see at times with in-person presentations, but it’s even more true with virtual ones and it ties to Tip #2 above. If you have multiple screens, understand which one will be shown by the presentation tool. Most, if not all, let you select which screen or even which window is being shared. This can be very important. If you choose to share a particular program window (say PowerPoint) and then try to switch to another window (say SSMS) your audience may not see the new window. Or, and this is very common, if you run PowerPoint in presenter mode where you have the presented slides on one screen, and your thumbnails and notes on another, make sure you know which screen is being shared. I did get this right with GotoWebinar (in part because I knew to look for it) but it wasn’t obvious at first how to do this.

In addition, decide where to put your webcam! If you’re sharing your face (and I’m a fan of it, I think it makes it easier for others to connect to you as a presenter), understand which screen you’ll be looking at the most; otherwise your audience may get an awkward view of you always looking off to another screen. And, if you can, try to make “eye contact” through the camera from time to time. Also be aware, and this is an issue I’m still trying to address, that you may have glare coming off of your glasses. For example, I need to wear reading glasses at my computer, and even after adjusting the lighting in the room, it became apparent that the brightness of my screens alone was causing a glare problem. I’ll be working on this!

Also be aware of what may be in the background of your camera. You don’t want to have any embarrassing items showing up on your webcam!

For Organizers

Tip #6: Provide access to the presentation tool a week beforehand

Now, this is partly on me. I didn’t think to ask Ed if I could log into one of the GotoWebinar channels beforehand, and I should have. But I’ll go a step further. A lesson I think we learned is that, as an organizer, you should make sure presenters can log in before the big day and practice with the tool. This allows them to learn all the controls before they go live. For example, I didn’t realize until 10 minutes were left in my presentation how to see who the attendees were. At first I could only see folks who had been designated as a panelist or moderator, so I was annoyed I couldn’t see who was simply attending. Finally I realized what I thought was simply a label was in fact a tab I could click on. Had I played with the actual tool earlier in the week I’d have known this far sooner. So organizers, if you can, arrange time for presenters to log in days before the event.

Tip #7: Have plenty of “Operators”

Every tool may call them by different names, but ensure that you have enough folks in each “room” or “channel” who can do things like mute/unmute people, ensure the presenter can be heard, etc. When I started my presentation, there was some hitch and there was no one around initially to unmute me. While I considered doing my presentation via interpretive dance or via mime, I decided not to. Ed was able to jump in and solve the problem. I ended up losing about 10 minutes of time due to this glitch.

Tip #8: Train your “Operators”

This goes back to the two previous tips: make sure your operators have training before the big day. Set up an hour a week before and have them all log in and practice how to unmute or mute presenters, how to pass control to the next operator, etc. Also, you may want to give them a script to read at the start and end of each session. “Good morning. Thank you for signing in. The presenter for this session will be John Doe and he will be talking about parameter sniffing in SQL Server. If you have a question, please enter it in the Q&A window and I will make sure the presenter is aware of it. This session is/isn’t being recorded.” At the end, add a closing item like, “Thank you for attending. Please remember to join us in Room #1 at 4:45 for the raffle and also when this session ends, there will be a quick feedback survey. Please take the time to fill it out.”

Tip #9: If you can, have a feedback mechanism

While people often don’t fill out the written feedback forms at a SQL Saturday, the forms that do come back can be valuable. Try to recreate this for virtual ones.

Tip #10: Have a speaker’s channel

I hadn’t given this much thought until I was talking to a fellow speaker, Rie Irish, later and remarked how I missed the interaction with my fellow speakers. She was the one who suggested a speaker’s “channel” or “room” would be a good idea and I have to agree. A private room where speakers can log in, chat with each other, and reach out to operators or organizers strikes me as a great idea. I’d highly suggest it.

Tip #11: Have a general open channel

Call this the “hallway” channel if you want, but try to recreate the hallway experience where folks can simply chat with each other. SQL Saturday is very much a social event, so try to leverage that! Let everyone chat together just like they would at an in-person SQL Saturday event.

For Attendees

Tip #12: Use social media

As a speaker or organizer, I love to see folks talking about my talk or event on Twitter and Facebook. Please, share the enthusiasm. Let others know what you’re doing and share your thoughts! This is actually a tip for everyone, but there are far more attendees than organizers/speakers, so you can do the most!

Tip #13: Ask questions, provide feedback

Every platform used for remote presentations offers some sort of Q&A or feedback. Please, use this. As a virtual speaker, it’s impossible to know if my points are coming across. I want/welcome questions and feedback, both during and after. As great as my talks are, or at least I think they are, it’s impossible to tell without feedback if they’re making an impact. That said, let me apologize right now: if during my talk you tried to ask a question or give feedback, I may have missed it because of my lack of familiarity with the tool and not having the planned operator in the room.

Tip #14: Attend!

Yes, this sounds obvious, but hey, without you, we’re just talking into a microphone! Just because we can’t be together in person doesn’t mean we should stop learning! Take advantage of this time to attend as many virtual events as you can! With so many being virtual, you can, for example, pick ones outside your timezone to better fit your schedule, or ones in different parts of the world! Being physically close is no longer a requirement!

In Closing

Again, I want to reiterate that Ed and his team did a bang-up job with our SQL Saturday and I had a blast and everyone I spoke to had a great time. But of course, doing events virtually is still a new thing and we’re learning. So this is an opportunity to take the lessons from a great event and make yours even better!

I had a really positive experience presenting virtually and look forward to my PASS Summit presentation, and am encouraged to put in for more virtual SQL Saturdays after this.

In addition, I’d love to hear what tips you might add.